AI Is Busy Creating Adult Content. It Doesn’t Have Time To Steal Your Job

The rush for easy money is making sure that we don’t have to worry about AI replacing us in the workplace any time soon

A Better World Tomorrow is an entirely reader-supported publication and I would like to keep it accessible to all. However, if you read it regularly and appreciate the content, please consider becoming a paid subscriber. Or you can buy me a coffee to support my writing instead. And please consider sharing this article. It really helps spread the word.

One of the things I’m least worried about at the moment is the risk of losing my job to AI.

Yes, there are signs that, at some point, some jobs will no longer be needed. They will go the way of the cobbler and the cooper. And it’s true that the risk of being replaced is higher for women’s jobs.

But for now, AI tool developers seem to be focusing their energy on two things: creating unhelpful comments or text for social media for the next generation of “successful influencers.”

Or developing tools that make fake people have sex. And real people have fake sex.

In any case, these are the least useful things imaginable. But they also bring in the most money.

While the adult content AI industry is thriving, traditional companies are still struggling and often failing to find a genuine use case for AI.

Of course, everyone wants to be first on the AI train because they’re told this is the place to be. They spend a lot of money to hire data scientists and “AI specialists” to develop strategies but have no real idea what for.

As HRDive reports: “Organizations are beginning to realize that the practicalities of embedding AI into core business operations is far from simple,” Oliver Shaw, CEO of Orgvue, said in a statement.

And I can attest to that. A friend recently left a cushy data scientist job when she realized that the company had no strategy and no data hygiene and expected her to fix the mess.

Meanwhile, the AI porn side of the AI bubble is thriving.

Every AI image generation provider is fighting and failing to stop people from using their tools to create nonconsensual porn images. For some reason, porn is what people seem most eager to create with these tools.

Every time a new AI image creation engine pops up on the market, someone immediately tries to exploit it for porn.

Just last month, 404media reported that “Leonardo AI, an AI image generating platform that’s raised $31 million from investors including Samsung, is being used to generate nonconsensual sexual images of celebrities.”

Of course, Leonardo AI and similar sites try their best to prevent abuse. Simple requests to create AI porn usually no longer work.

But there are people constantly working on getting around the guardrails these companies have put in place.

One user in an infamous Telegram group reported that he had used Leonardo AI to create nude photos of Billie Eilish. He also posted the customized prompts he used to get around the blocks.

So there’s still no way for women to protect themselves from random men creating nudes of them with AI.

The only difference is that we’ve now progressed from images to videos.

A few weeks ago, Google banned a face-swapping app from its store for advertising its ability to create non-consensual, AI-generated sex videos on websites dedicated to deepfake porn.

According to a five-star review, the app was “For NSFW AI lovers.”

Before Google removed it from the store, it was downloaded more than 10,000 times.

Apple, on the other hand, still allows face-swapping apps in its App Store, knowing full well what their dual use case is.

Even if you’re not actively creating an app or website that creates AI nude content, you can profit from the craze.

Creating AI images and videos requires a lot of computing power. Some companies will pay you to allow them to use your computer’s idle resources.

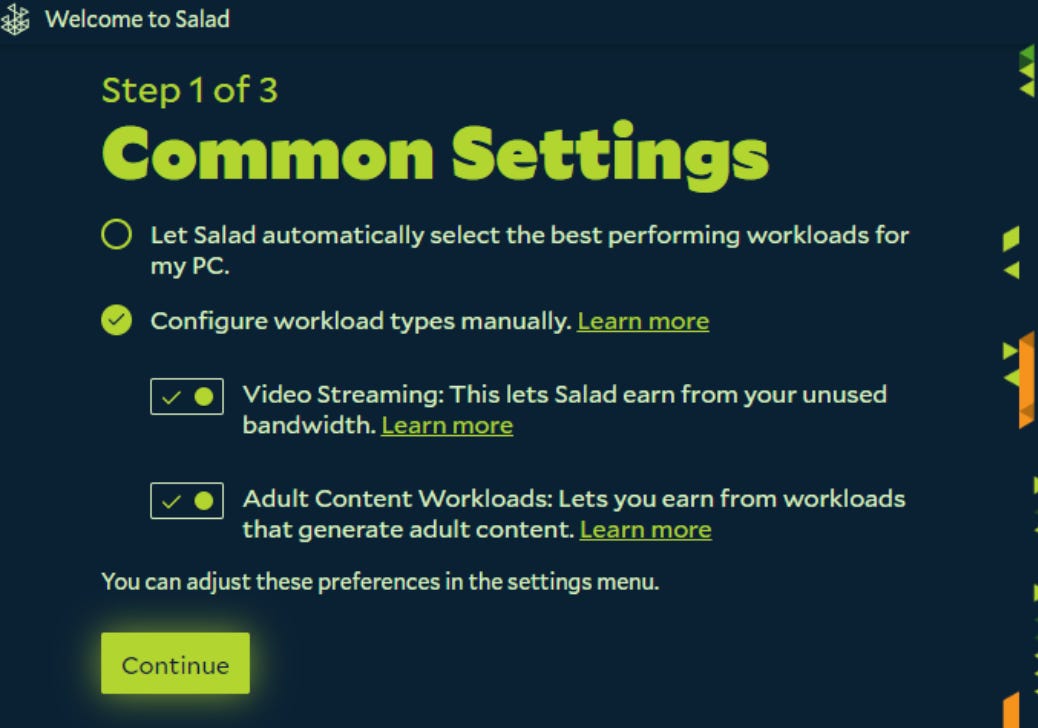

Salad — an “earn with your GPU (graphics processing unit)” company — will pay you with Roblox or Steam gift cards or give you Fortnite skins. If you’re not a gamer, you can be paid in PayPal or Spotify Credit.

All you have to do is allow AI companies to use your computer’s unused GPUs remotely.

Of course, there are use cases for this that are slightly less shady. Renting out your PC’s resources can be a legit way to make a little money on the side. For example, they might be used for mining crypto.

But some of these companies will use your resources to create adult AI content.

If you don’t want that, you have to explicitly say so.

Now, you might think, what’s the harm in creating imaginary nudes of people having or not having sex? No harm, no foul, right?

One of the biggest fouls with AI adult content is still the creation of nonconsensual AI porn and nudes. Often of celebrities, but also ordinary women.

We haven’t forgotten the big Taylor Swift nudes scandal, have we?

Nothing has changed despite the big uproar and big promises by clueless politicians.

Thanks to the media hype around artificial intelligence, a lot of people are worried about their job security.

Mainly because we have been given an exaggerated idea of what companies can do with AI within the corporate context.

So, while most of us are not at immediate risk of losing our jobs, AI image and video creation and manipulation are the areas that are getting the most traction. And making the most money.

Surprisingly, among the few people whose livelihoods are currently threatened by AI are women working in the adult content industry.

For example, an AI clone of OnlyFans features only AI influencers.

People running AI influencers' Instagram accounts are using real women’s bodies. They swap out adult content providers’ faces in videos and images for those of the AI influencers and profit from the traffic created.

At the moment, the risk of finding an AI-generated nude of ourselves on the internet is higher than the risk of losing our jobs to LLMs.

On the surface, companies are trying to combat the pervasive use of their tools for AI sex content. But as one commenter said:

At this point it seems dumb to think this is an oversight or limitation.

This is the main purpose of these lame image generators and these companies know it.

What do you think Big Tech should do to stop the wave of nonconsensual AI nudes? Should these apps be available in the Apple App Store?


Great points, but AI did actually gobble up my day job, which was technical translation. Looks like I’m going to have to rely on music for a living! 🤘

So AI is doing none of the things humans wanted robots for; it’s doing the art, writing, pornography and even the pretend fun of influencing.